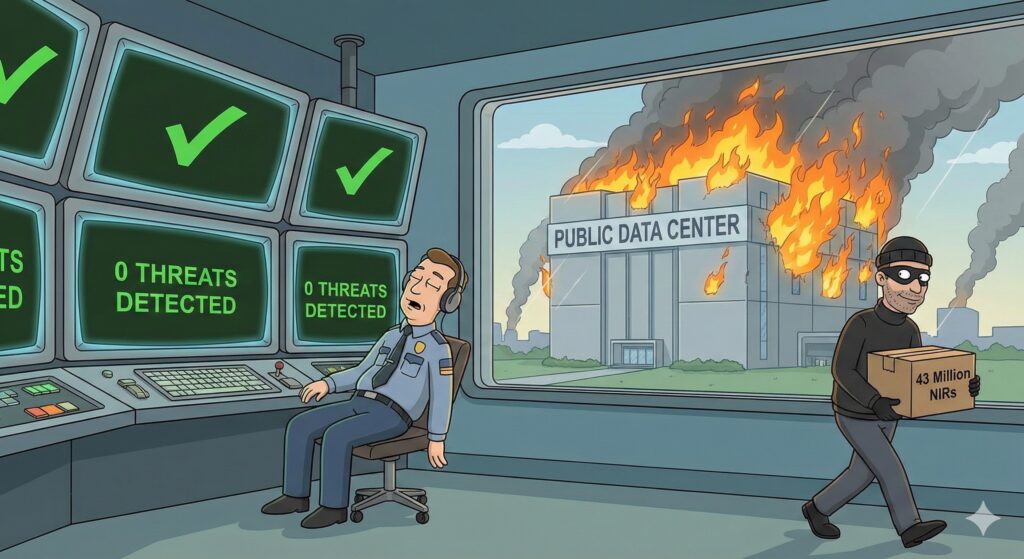

As the 2026 CNIL fines prove, modern social engineering detection is no longer optional. I’ve spent the better part of the last decade staring at dashboards that say ‘All Green’ while the house is actually burning down.

If the recent France Travail disaster—and that spicy €5M CNIL fine (SAN-2026-003) announced in late January 2026—tells us anything, it’s that our “secure” systems are often just very expensive paperweights.

We’re still trying to fight 2026 threats with 1990s logic. Here’s why your static automation is failing and how we’re actually fixing the “20% gap” where the most dangerous leaks live.

1. The “Trusted Partner” Mirage: The Front Door is Already Open

The France Travail breach wasn’t a “Mission Impossible” laser-grid heist. It was a Social Engineering masterpiece. Attackers didn’t hack a server; they “hacked” a human counselor at a CAP EMPLOI partner branch.

The systemic failure here is the Partner Trust Trap. Large institutions rely on expert-system automation: rigid, rule-based logic that says, “If User has Role ‘Partner’, give them the keys.”

But in 2026, the battle of Social Engineering vs. Expert Systems is a slaughter. Static rules cannot detect the subtle “behavioral shift” when a legitimate counselor’s account suddenly starts harvesting tens of millions of records across 20 years of history. The system saw a valid login and stayed silent while the data of 43 million people was exported to the dark web.
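Here is a minimal Python sketch of the Partner Trust Trap. It contrasts a role-only rule with one that also checks request volume. The `AccessRequest` type, the 10x tolerance, and the row counts are all hypothetical. They are not France Travail's actual access logic.

```python
from dataclasses import dataclass


@dataclass
class AccessRequest:
    user_id: str
    role: str
    rows_requested: int


def static_rule(req: AccessRequest) -> bool:
    # The "Partner Trust Trap": the role alone decides; volume is never inspected.
    return req.role == "Partner"


def behavior_aware_rule(req: AccessRequest, typical_rows: int, tolerance: float = 10.0) -> bool:
    # Same role check, plus a ceiling relative to this user's usual request size.
    if req.role != "Partner":
        return False
    return req.rows_requested <= typical_rows * tolerance


harvest = AccessRequest("counselor-42", "Partner", rows_requested=2_000_000)
print(static_rule(harvest))                           # True: the bulk export is allowed
print(behavior_aware_rule(harvest, typical_rows=50))  # False: far beyond 10x the usual 50 rows
```

Even this crude volume ceiling is still a static rule. The point is only that the role check answers "who" and never asks "how much".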

2. The Internal Rot: When “Admin Privileges” Become a Commodity

We need to talk about the elephant in the room: Insider Corruption. Over the last two years, we’ve seen a rise in public agents—tax officials, clerks, health admins—abusing their internal access to sell data for targeted crimes, particularly crypto-related extortion.

The problem isn’t just “evil employees”; it’s Architectural Archaism. We are still using paper-era processes in digital systems. When a single administrator has “God Mode” access to millions of dossiers without real-time, query-level auditing, the temptation for “data-for-hire” becomes a systemic risk.
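"Query-level auditing" can be as simple as recording every query at the moment it runs, together with the caller and the result size. Below is a minimal sketch using a Python decorator. `AUDIT_LOG` is a hypothetical in-memory stand-in for an append-only audit sink, and `fetch_dossiers` is a hypothetical data-access function.

```python
import functools
import time
from typing import Callable

AUDIT_LOG: list[dict] = []  # hypothetical stand-in for an append-only audit sink


def audited(fn: Callable[..., list]) -> Callable[..., list]:
    """Record every call with its caller, parameters and result size."""
    @functools.wraps(fn)
    def wrapper(user_id: str, *args, **kwargs) -> list:
        rows = fn(user_id, *args, **kwargs)
        AUDIT_LOG.append({
            "ts": time.time(),
            "user": user_id,
            "query": fn.__name__,
            "params": {"args": args, "kwargs": kwargs},
            "rows_returned": len(rows),
        })
        return rows
    return wrapper


@audited
def fetch_dossiers(user_id: str, region: str) -> list:
    # Hypothetical data access; a real system would query the database here.
    return [f"{region}-dossier-{i}" for i in range(3)]


fetch_dossiers("admin-7", "IDF")
print(AUDIT_LOG[-1]["user"], AUDIT_LOG[-1]["rows_returned"])  # admin-7 3
```

In a real deployment these records would be streamed to a system the administrator cannot modify. Otherwise "God Mode" extends to the audit trail itself.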

French institutions suffer from Privilege Explosion. We treat data from 2004 with the same “hot” availability as data from this morning. This Data Hoarding creates a massive attack surface where a single compromised or corrupted internal account can trigger a national security crisis.
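One way to stop treating 2004 data like this morning's data is to make access depend on the record's age. The sketch below shows the idea. The three-year "hot" window and the justification-ticket requirement are hypothetical policy choices, not a description of any French institution's rules.

```python
from datetime import date
from typing import Optional

HOT_WINDOW_YEARS = 3  # hypothetical threshold for what stays "hot"


def tier_for(record_date: date, today: date) -> str:
    """Classify a record as 'hot' (recent) or 'cold' (archived) by its age."""
    age_years = (today - record_date).days / 365.25
    return "hot" if age_years <= HOT_WINDOW_YEARS else "cold"


def may_read(record_date: date, today: date, justification_ticket: Optional[str] = None) -> bool:
    # Hot records flow through the normal workflow; cold records are only served
    # when the request carries an explicit, auditable justification.
    if tier_for(record_date, today) == "hot":
        return True
    return justification_ticket is not None


today = date(2026, 2, 1)
print(may_read(date(2025, 12, 1), today))                # True: recent record
print(may_read(date(2004, 5, 1), today))                 # False: 2004 data is cold
print(may_read(date(2004, 5, 1), today, "TICKET-0193"))  # True: justified access
```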

3. The 20% Gap: Why Your “All Green” Dashboard is Lying to You

Look at the patterns from CAF, AP-HP, and France Travail (2024–2026). They all caught the 80% of noisy, obvious attacks but missed the “20% raté” (the missed 20%).

This 20% represents the “low-and-slow” anomalies: the trusted agent querying just a bit too much, or the partner login appearing from a new (but “clean”) IP. This is where static automation fails. It catches the brute force but ignores the insider-like behavior of a compromised session.
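The difference between a static threshold and a behavioral baseline fits in a few lines. In the sketch below, a global "alert above 1,000 queries/day" rule ignores a counselor who jumps from about 40 to 70 queries. A per-user z-score flags the same jump. The threshold values and the query history are illustrative assumptions.

```python
import statistics

STATIC_THRESHOLD = 1_000  # hypothetical global rule: "alert above 1,000 queries/day"


def static_alert(today_count: int) -> bool:
    return today_count > STATIC_THRESHOLD


def baseline_alert(history: list[int], today_count: int, z_max: float = 3.0) -> bool:
    """Flag counts that deviate from this user's own history, not a global cap."""
    mean = statistics.fmean(history)
    std = statistics.pstdev(history) or 1.0  # avoid division by zero on flat histories
    return (today_count - mean) / std > z_max


history = [40, 38, 45, 42, 41]  # one counselor's daily query counts (illustrative)
print(static_alert(70))             # False: far below the global cap
print(baseline_alert(history, 70))  # True: about 12 standard deviations above this user's norm
```

A production baseline would also need to handle seasonality and slow drift, for example with a rolling or exponentially weighted history. Otherwise an attacker can raise the baseline gradually.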

In my work on Scalefine’s Streaming Analytics Project, I focus on this exact gap. We’ve moved past the era where AI automation for business just meant “make it faster.” Now, it must mean “detect the deviation.” If you aren’t monitoring the context of the query, you aren’t actually secure.

To build a 2026-ready defense against the patterns seen in France Travail or AP-HP, I propose a “Dual-Layer” architecture. This isn’t just about adding more security; it’s about fundamentally changing how humans interact with the data lake.

4. The Architecture of Sanity: Splitting Detection from Automation

In the “old-school” public expert system, security was a thin shell around a massive, messy core. In 2026, we fix this by separating Real-time Behavioral Detection from Intelligent Data Automation.

Layer 1: The Sentinel (Streaming Behavioral Detection)

This layer exists exclusively to catch the missed 20%: the low-and-slow exfiltration that signature-based tools miss. It doesn’t process benefit claims; it watches the watchers. Using Apache Flink 2.2 for stateful stream processing, we ingest every authentication event and its query metadata in real time.
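"Stateful" is the key word here. The detector keeps running totals per user across many small events, so it can spot exfiltration even when no single query looks suspicious. The sketch below simulates that keyed sliding-window state in plain Python. It is not Flink's API; in Flink this state would typically live in a `KeyedProcessFunction` with timers. The one-hour window and the 500-row ceiling are hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class QueryEvent:
    user_id: str
    ts: float  # event time in seconds
    rows: int  # rows returned by the query


class SlidingRowCounter:
    """Per-user (keyed) state: total rows read within the last `window_s` seconds."""

    def __init__(self, window_s: float, max_rows: int):
        self.window_s = window_s
        self.max_rows = max_rows
        self.events: dict[str, deque] = defaultdict(deque)
        self.totals: dict[str, int] = defaultdict(int)

    def process(self, ev: QueryEvent) -> bool:
        q = self.events[ev.user_id]
        q.append((ev.ts, ev.rows))
        self.totals[ev.user_id] += ev.rows
        # Evict events that fell out of the window (assumes in-order events).
        while q and q[0][0] <= ev.ts - self.window_s:
            _, old_rows = q.popleft()
            self.totals[ev.user_id] -= old_rows
        return self.totals[ev.user_id] > self.max_rows  # True => raise an alert


counter = SlidingRowCounter(window_s=3600, max_rows=500)
# 100 rows every 10 minutes: each query is small, the hourly total is not.
alerts = [counter.process(QueryEvent("u1", float(t), 100)) for t in range(0, 3001, 600)]
print(alerts)  # [False, False, False, False, False, True]
```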

- Access Entropy & Vector Baselines: Flink 2.2’s native `ML_PREDICT` and `VECTOR_SEARCH` functions let us embed session patterns with a model and compare them against stored behavioral baselines as events arrive. If a counselor’s query pattern drifts semantically toward “bulk scraper” territory, the system triggers a kill-switch before the data even leaves the building. That is the kind of rapid detection and response DORA expects.
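The drift check behind the kill-switch comes down to comparing a live session vector with the user's baseline vector. In the design above, a model called via `ML_PREDICT` would produce the embeddings and `VECTOR_SEARCH` would do the comparison. The sketch below makes different assumptions: hand-made 3-dimensional toy features, plain cosine similarity, and a hypothetical 0.8 cutoff.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def should_kill_session(session_vec: list[float], baseline_vec: list[float],
                        min_similarity: float = 0.8) -> bool:
    # Kill-switch fires when the live session drifts away from the user's baseline.
    return cosine(session_vec, baseline_vec) < min_similarity


# Toy "embeddings" (hypothetical features):
# [single-dossier lookups, eligibility checks, bulk/paginated exports]
baseline = [0.9, 0.4, 0.05]
normal_session = [0.85, 0.5, 0.1]
scraper_session = [0.1, 0.05, 0.99]

print(should_kill_session(normal_session, baseline))   # False: similarity is about 0.99
print(should_kill_session(scraper_session, baseline))  # True: similarity is about 0.16
```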

Layer 2: The Proxy (Multi-Agent GenAI Automation)

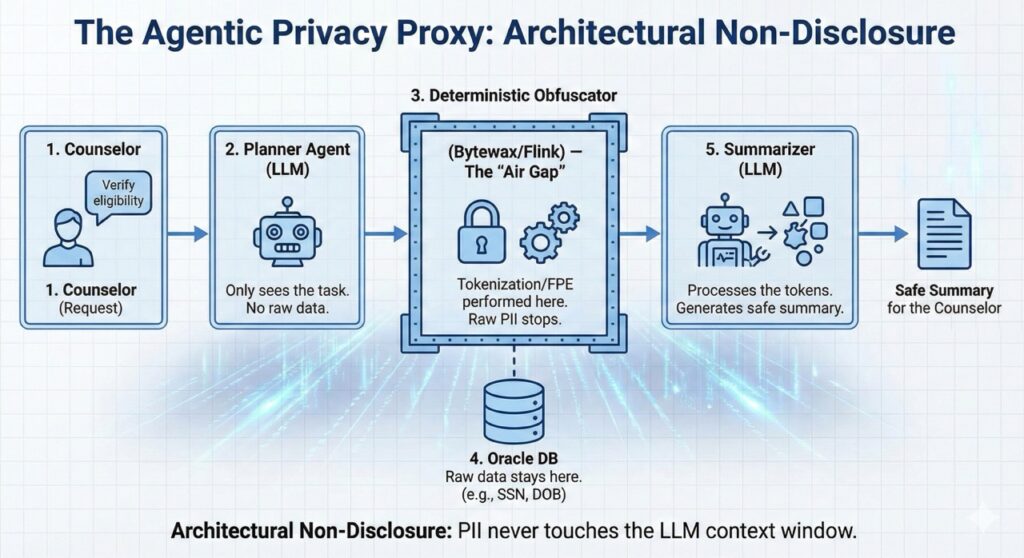

The most effective way to stop a corrupt clerk or a hacked partner is to remove their need to see raw sensitive records entirely. In legacy environments, direct human access is the root cause of these breaches. Instead, we insert a privacy-first multi-agent proxy layer.

Core Principle: Raw sensitive data (NIR/SSN, full histories) never enters any LLM context. LLMs are used only for orchestration and planning. All data transformation happens through deterministic, rule-based components outside the model.
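The core principle implies a hard boundary. A deterministic executor holds the raw records and returns only derived facts. A guard then checks every string before it enters an LLM context. The sketch below uses a deliberately simplified NIR pattern (an assumption: real NIRs have more structure, such as the Corsican codes 2A/2B). The tool name, handle, and NIR-shaped number are all made up.

```python
import re

# Simplified NIR shape (assumption): a leading 1 or 2, then 12 digits, optional 2-digit key.
NIR_RE = re.compile(r"\b[12]\d{12}(?:\d{2})?\b")


class LLMContextError(ValueError):
    """Raised when LLM-bound text appears to contain a raw identifier."""


def guard_llm_context(text: str) -> str:
    # Last line of defense before anything is sent to the Planner or Summarizer.
    if NIR_RE.search(text):
        raise LLMContextError("raw NIR detected in LLM-bound context")
    return text


class ToolExecutor:
    """Deterministic, non-LLM side: holds raw records and returns derived facts only."""

    def __init__(self, records: dict[str, dict]):
        self._records = records  # raw data stays inside this object

    def run(self, tool: str, handle: str) -> str:
        record = self._records[handle]
        if tool == "count_open_claims":  # hypothetical tool
            return f"{handle}: {len(record['claims'])} open claims"
        raise KeyError(f"unknown tool: {tool}")


executor = ToolExecutor({"case-001": {"nir": "185057512345678", "claims": ["c1", "c2"]}})
# The Planner only ever names a tool and an opaque handle; it never sees the record.
result = guard_llm_context(executor.run("count_open_claims", "case-001"))
print(result)  # case-001: 2 open claims
```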

The Improved Privacy Workflow:

- Task-Based Orchestration: The counselor submits a goal (e.g., “Verify RSA eligibility”). A Planner agent (LLM) generates the workflow and calls tools—it never receives or sees the raw data itself.

- Data Access & Obfuscation Layer (Non-LLM): Secure views apply transformations before any data leaves the Oracle layer:
  - Tokenization + FPE: NIRs are replaced by reversible tokens (e.g., @@NIR_TK_784392@@) or, with format-preserving encryption, by ciphertexts that keep the NIR’s shape. Either way, they reveal nothing to the LLM or the human.
  - Differential Privacy: Aggregated results get calibrated noise (e.g., “Eligibility probability: 82% ± 5%”).

- Privacy Enforcement Layer (Deterministic Auditor): A rule-based engine (not an LLM) inspects every output, using regex and domain rules to block anything that could enable re-identification.

- Summarizer & Quality Auditor (Self-Reflection): Only after a payload passes this auditor does a Summarizer agent (LLM) receive it and draft a decision for the human.
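The non-LLM steps of this workflow can be sketched with the standard library. The sketch covers three of them: tokenization, a Laplace-noised count, and the regex auditor. It makes several substitutions, stated plainly. Tokens are keyed HMAC digests with a lookup table for reversal, not true FPE (an FPE scheme such as NIST FF1 would keep the 15-digit shape). The differential-privacy part applies the standard Laplace mechanism to a count query. The key, epsilon, and NIR pattern are hypothetical.

```python
import hashlib
import hmac
import random
import re

NIR_RE = re.compile(r"\b[12]\d{12}(?:\d{2})?\b")  # simplified NIR shape (assumption)


class TokenVault:
    """Keyed, deterministic tokenization with a lookup table for authorized reversal."""

    def __init__(self, key: bytes):
        self._key = key
        self._reverse: dict[str, str] = {}

    def tokenize(self, nir: str) -> str:
        digest = hmac.new(self._key, nir.encode(), hashlib.sha256).hexdigest()
        token = f"@@NIR_TK_{digest[:16]}@@"
        self._reverse[token] = nir
        return token

    def detokenize(self, token: str) -> str:
        # Only the trusted, non-LLM side should ever hold a reference to the vault.
        return self._reverse[token]


def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1, so scale = 1/epsilon)."""
    scale = 1.0 / epsilon
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


def audit_output(text: str) -> str:
    """Deterministic auditor: block any outbound payload that still carries a raw NIR."""
    if NIR_RE.search(text):
        raise PermissionError("blocked: raw identifier in outbound payload")
    return text


vault = TokenVault(b"demo-key-not-for-production")  # hypothetical key; use a KMS in practice
token = vault.tokenize("185057512345678")
noisy = dp_count(120, epsilon=1.0, rng=random.Random(7))
payload = audit_output(f"Case {token}: about {noisy:.0f} similar cases in the region")
print(payload)
```

A regex auditor only catches identifiers it has a pattern for. Quasi-identifiers such as a rare birth date combined with a small commune need domain rules on top.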

Bonus: The Quality Warranty Loop

Before the final summary reaches the human, a Self-Reflection agent critiques the draft against known domain logic. Cross-referencing the summary with the deterministic rule-set can reduce the “human-induced operational errors” typical of overburdened administrations. The goal is a final recommendation that isn’t just private but actually correct: a layer of quality warranty that paper-era processes simply cannot match.
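The critique itself may be done by an LLM, but the part that makes the loop trustworthy is the deterministic cross-check. The sketch below shows only that check. It assumes the draft's verdict has already been extracted as a single label. The income ceilings are invented for illustration and are not the real RSA scales.

```python
from dataclasses import dataclass


@dataclass
class CaseFacts:
    monthly_income_eur: float
    household_size: int


# Hypothetical ceilings for illustration only; these are NOT the real RSA scales.
HYPOTHETICAL_CEILING_EUR = {1: 600.0, 2: 900.0, 3: 1080.0}


def rule_says_eligible(facts: CaseFacts) -> bool:
    ceiling = HYPOTHETICAL_CEILING_EUR.get(facts.household_size, 1200.0)
    return facts.monthly_income_eur < ceiling


def review_draft(draft_verdict: str, facts: CaseFacts) -> str:
    """Accept the LLM draft only if its verdict matches the deterministic rule."""
    expected = "eligible" if rule_says_eligible(facts) else "not eligible"
    if draft_verdict.strip().lower() != expected:
        return f"REJECTED: draft says '{draft_verdict}', rules say '{expected}'"
    return f"APPROVED: {expected}"


facts = CaseFacts(monthly_income_eur=750.0, household_size=1)
print(review_draft("eligible", facts))      # REJECTED: draft says 'eligible', rules say 'not eligible'
print(review_draft("Not eligible", facts))  # APPROVED: not eligible
```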

5. The Tech Stack: Bridging the Gap Between DORA and GDPR

Implementing this requires a 2026 stack that treats privacy as infrastructure, not a checkbox.

| Technology | Role in the Stack | Regulatory Impact |
|---|---|---|
| Apache Flink 2.2 | Stateful Detection Engine: Uses ML_PREDICT to score session risk in real time. | DORA: Supports “continuous monitoring” & rapid response. |
| Redpanda / Kafka | Streaming Backbone: Ultra-fast, low-latency log for all access and agent events. | DORA: Real-time visibility into third-party risks. |
| LangGraph / LangChain | Agent Orchestration: Manages the Planner/Auditor workflow with human-in-the-loop. | GDPR: Supports “Purpose Limitation” (Art. 5(1)(b)). |
| FPE & Tokenization | Deterministic Obfuscation: Masks raw PII before it hits the intelligence layer. | GDPR: Privacy-by-design & data minimization. |
| Synthetic Data (GenAI) | Digital Twins: Generates non-identifiable data for reasoning and model training. | Non-Disclosure: Breaks the link between signal and identity. |

6. The Scaleup Leapfrog: Designing Without Tech Debt

The silver lining? Small-to-medium scaleups can “leapfrog” these legacy traps. You don’t have 40 years of paper-era data to migrate or a “spaghetti” PL/SQL backbone to untangle.

By designing from scratch with low-cost streaming analytics, you can build this clean separation from Day 1. You can integrate Just-in-Time access where privileges are ephemeral and revoked the moment a workflow completes.
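Just-in-Time access maps naturally onto a context manager. The privilege is granted when the workflow starts and revoked in a `finally` block when it ends, even if it fails. A TTL acts as a backstop. `GrantStore` is a hypothetical in-memory stand-in for whatever identity or policy service you use.

```python
import time
from contextlib import contextmanager


class GrantStore:
    """Hypothetical in-memory store of ephemeral privileges."""

    def __init__(self):
        self._grants: dict[tuple[str, str], float] = {}

    def grant(self, user: str, scope: str, ttl_s: float) -> None:
        self._grants[(user, scope)] = time.monotonic() + ttl_s

    def revoke(self, user: str, scope: str) -> None:
        self._grants.pop((user, scope), None)

    def is_allowed(self, user: str, scope: str) -> bool:
        expiry = self._grants.get((user, scope))
        return expiry is not None and time.monotonic() < expiry


@contextmanager
def just_in_time(store: GrantStore, user: str, scope: str, ttl_s: float = 300.0):
    store.grant(user, scope, ttl_s)  # TTL is a backstop if revocation never runs
    try:
        yield
    finally:
        # Revoke the moment the workflow completes, even if it raised.
        store.revoke(user, scope)


store = GrantStore()
with just_in_time(store, "counselor-42", "dossier:read"):
    print(store.is_allowed("counselor-42", "dossier:read"))  # True
print(store.is_allowed("counselor-42", "dossier:read"))      # False
```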

Instead of buying a bloated security suite, you build smart data systems that integrate these layers into the code. This is the core philosophy behind my latest work on GitHub. In the DORA era, the most resilient data is the data that was never disclosed to a human in the first place.
